Mark Cartwright*, Ayanna Seals*, Justin Salamon*, Alex Williams^, Stefanie Mikloska^, Duncan MacConnell*, Edith Law^, Juan P. Bello*, and Oded Nov*

*New York University

^University of Waterloo

This page is a summary of the work presented in:

Abstract

Audio annotation is key to developing machine-listening systems; yet, effective ways to accurately and rapidly obtain crowdsourced audio annotations are understudied. In this work, we seek to quantify the reliability/redundancy trade-off in crowdsourced soundscape annotation, investigate how visualizations affect accuracy and efficiency, and characterize how performance varies as a function of audio characteristics. Using a controlled experiment, we varied sound visualizations and the complexity of soundscapes presented to human annotators. Results show that more complex audio scenes result in lower annotator agreement, and spectrogram visualizations are superior in producing higher quality annotations at lower cost of time and human labor. We also found recall is more affected than precision by soundscape complexity, and mistakes can often be attributed to certain sound event characteristics. These findings have implications not only for how we should design annotation tasks and interfaces for audio data, but also how we train and evaluate machine-listening systems.

Synthesized Soundscapes

To run an experiment in which we controlled the complexity of the soundscapes, we synthesized soundscapes using Scaper, a soundscape synthesis tool we developed. With Scaper we could control the sound class and distribution of events, which in turn enabled us to control the maximum polyphony and Gini polyphony, our two measures of soundscape complexity.

- Maximum polyphony describes the maximum number of overlapping sound events at any point in the soundscape.

- Gini polyphony measures the concentration of polyphony within a soundscape, allowing us to distinguish between soundscapes whose sound events are concentrated in a short period of time (e.g., a car crash) and those whose events are distributed over a longer period of time (e.g., several idling engines at a stoplight).

In our experiment, we grouped our soundscapes into two levels of Gini polyphony and three levels of maximum polyphony (1, 2, and 3–4 overlapping sound events), for a total of 6 complexity conditions. We synthesized 10 soundscapes for each of these conditions.
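To make the two complexity measures concrete, here is a minimal sketch of how they could be computed from a list of event onsets and offsets. It is an illustration under stated assumptions, not the implementation used in the study: the 0.1 s frame hop, the function names, and the choice to apply a standard Gini coefficient to the per-frame polyphony counts are all assumptions, and the paper's exact definition of Gini polyphony may differ.

```python
from typing import List, Tuple

Event = Tuple[float, float]  # (onset, offset) in seconds


def polyphony_curve(events: List[Event], duration: float, hop: float = 0.1) -> List[int]:
    """Count how many events are active at the centre of each hop-sized frame."""
    n_frames = int(round(duration / hop))
    curve = []
    for i in range(n_frames):
        t = (i + 0.5) * hop  # frame centre (assumed sampling point)
        curve.append(sum(1 for onset, offset in events if onset <= t < offset))
    return curve


def max_polyphony(curve: List[int]) -> int:
    """Largest number of simultaneously active events."""
    return max(curve, default=0)


def gini(values: List[int]) -> float:
    """Standard Gini coefficient: 0 means evenly spread, values near 1 mean
    the polyphony is concentrated in a few frames."""
    n = len(values)
    total = sum(values)
    if n == 0 or total == 0:
        return 0.0
    ordered = sorted(values)
    weighted = sum((i + 1) * x for i, x in enumerate(ordered))
    return (2.0 * weighted) / (n * total) - (n + 1) / n


# Three events packed into one second versus one event spanning the whole clip.
concentrated = polyphony_curve([(4.0, 5.0), (4.0, 5.0), (4.0, 5.0)], duration=10.0)
spread = polyphony_curve([(0.0, 10.0)], duration=10.0)
print(max_polyphony(concentrated), gini(concentrated), gini(spread))
```

Under these assumptions, the concentrated soundscape scores a high Gini value while the evenly spread one scores zero, which mirrors the car-crash versus idling-engines contrast described above.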

Every soundscape is 10 seconds long and has a background of Brownian noise resembling the typical “hum” often heard in urban environments. The soundscapes contained the following classes of sound events:

- car horn honking

- dog barking

- engine idling

- gun shooting

- jackhammer drilling

- music playing

- people shouting

- people talking

- siren wailing

All of the soundscapes used in our experiment are available in the Seeing Sound Dataset. Here is a sample of the soundscapes:

Gini polyphony level 0 / max polyphony level 0:

Gini polyphony level 1 / max polyphony level 0:

Gini polyphony level 0 / max polyphony level 1:

Gini polyphony level 1 / max polyphony level 1:

Gini polyphony level 0 / max polyphony level 2:

Gini polyphony level 1 / max polyphony level 2:

Sound Visualizations

In our experiment, we compared three common visualization strategies: waveform, spectrogram, and a no-visualization control strategy.

- Waveform visualizations are likely the most common sound visualization, and are typically used in audio/video recording, editing, and music player applications. They are two-dimensional visualizations in which the horizontal axis represents time, and the vertical axis represents the amplitude of the signal.

- Spectrogram visualizations are more commonly found in professional audio applications. They are time-frequency representations of a signal that are typically computed using the short-time Fourier transform (STFT). They are two-dimensional visualizations that represent three dimensions of data: the horizontal axis represents time, the vertical axis represents frequency, and the color of each time-frequency pair (i.e., a pixel at a particular time and frequency) indicates its magnitude.
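As a rough illustration of how a spectrogram's time-frequency magnitudes arise from the STFT, the sketch below windows a signal into overlapping frames and takes the magnitude of each frame's discrete Fourier transform. It uses only the Python standard library and a naive DFT to stay self-contained; the frame length, hop size, and Hann window are assumptions chosen for the example, not the settings of the Audio Annotator, and a real application would use an FFT library.

```python
import cmath
import math
from typing import List


def stft_magnitude(signal: List[float], frame_len: int = 64, hop: int = 32) -> List[List[float]]:
    """Return magnitudes indexed as [frame][frequency_bin]."""
    # Hann window (assumed choice) to reduce spectral leakage at frame edges.
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / frame_len) for n in range(frame_len)]
    n_bins = frame_len // 2 + 1  # non-negative frequencies only
    frames = []
    for start in range(0, len(signal) - frame_len + 1, hop):
        frame = [signal[start + n] * window[n] for n in range(frame_len)]
        mags = []
        for k in range(n_bins):
            # Naive DFT of the windowed frame at frequency bin k.
            acc = sum(
                frame[n] * cmath.exp(-2j * math.pi * k * n / frame_len)
                for n in range(frame_len)
            )
            mags.append(abs(acc))
        frames.append(mags)
    return frames


# A pure tone whose frequency falls exactly on bin 8 of a 64-sample frame.
tone = [math.sin(2 * math.pi * 8 * n / 64) for n in range(256)]
spectrogram = stft_magnitude(tone)
peak_bins = [max(range(len(f)), key=f.__getitem__) for f in spectrogram]
print(peak_bins)
```

Each inner list corresponds to one column of pixels in the spectrogram: the index is the position on the frequency axis and the value is what gets mapped to color.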

A Web Interface for Audio Annotation

To run our experiment with crowdsourced participants, we developed a web-based audio annotation tool called the Audio Annotator, which supports both waveform and spectrogram sound visualizations. This tool is now open source and available at https://github.com/CrowdCurio/audio-annotator

Experiment Design

To study the effects of sound visualization and soundscape complexity on annotation quality, our study followed a 3 x 3 x 2 between-subjects factorial experimental design (3 visualizations, 3 maximum polyphony levels, 2 Gini polyphony levels), with a total of 18 conditions. We recruited 30 participants from Amazon’s Mechanical Turk to complete each of the 18 conditions, for a total of 540 participants. All participants passed a hearing screening, watched a tutorial video, completed a practice audio annotation, completed 10 recorded audio annotations, and filled out surveys. For more details about the stimuli, experimental method, and tools, please refer to the paper.
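The factorial design can be enumerated in a few lines, which makes the condition and participant counts easy to check. The condition labels and the round-robin assignment helper below are hypothetical and only illustrate what a between-subjects design means (each participant sees exactly one condition); they do not describe how participants were actually assigned.

```python
from itertools import product

VISUALIZATIONS = ["none", "waveform", "spectrogram"]
MAX_POLYPHONY_LEVELS = [0, 1, 2]  # 1, 2, and 3-4 overlapping sound events
GINI_POLYPHONY_LEVELS = [0, 1]
PARTICIPANTS_PER_CONDITION = 30

# Every combination of the three factors is one experimental condition.
conditions = list(product(VISUALIZATIONS, MAX_POLYPHONY_LEVELS, GINI_POLYPHONY_LEVELS))
total_participants = len(conditions) * PARTICIPANTS_PER_CONDITION


def assign_condition(participant_index: int):
    """Hypothetical round-robin assignment: one condition per participant."""
    return conditions[participant_index % len(conditions)]


print(len(conditions), total_participants)
```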

Results

Here we describe a few of the results of our analysis. For more results and details, please refer to the paper.

We investigated how the aggregate annotation quality improved as we increased the number of participants and found that there was little benefit of having more than 16 annotators:

We investigated both the quality and speed of annotations by calculating the integrals of annotation quality metrics over the first five minutes of each annotation task. We found that participants with spectrogram visualization aids produce higher quality annotations in less time than with waveform visualizations or without a sound visualization aid. Therefore, spectrograms seem to be more effective visualizations for audio annotation.
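The idea of integrating a quality metric over time can be sketched as follows. This is a simplified illustration, assuming that quality is recorded as (time, value) samples, that it is zero before the first sample, and that it stays constant between samples; the function name and these modeling choices are assumptions rather than the paper's exact procedure.

```python
from typing import List, Tuple


def quality_auc(samples: List[Tuple[float, float]], horizon: float = 300.0) -> float:
    """Integrate a piecewise-constant quality curve from 0 to `horizon` seconds.

    `samples` holds (time_in_seconds, quality) pairs sorted by time.
    """
    area = 0.0
    prev_t, prev_q = 0.0, 0.0
    for t, q in samples:
        if t >= horizon:
            break  # ignore anything after the five-minute window
        area += prev_q * (t - prev_t)
        prev_t, prev_q = t, q
    # Hold the last observed quality until the end of the window.
    area += prev_q * (horizon - prev_t)
    return area


# Two invented annotators who reach the same final quality at different speeds.
fast = [(30.0, 0.5), (60.0, 0.8)]
slow = [(120.0, 0.5), (240.0, 0.8)]
print(quality_auc(fast), quality_auc(slow))
```

Both invented annotators end at the same quality, but the faster one accumulates a larger area, so a single number captures quality and speed together.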

We investigated the presence of learning effects in audio annotation by looking at how annotation quality improved as participants gained experience over the course of the 10 annotation tasks they completed. We found that all participants increased their recall performance over time, but only participants aided with the spectrogram visualization seem to exhibit a trend in which precision performance increased over time. This implies that one should expect annotation quality to improve as annotators gain experience, and this improvement may be even stronger when participants are aided with spectrogram visualizations. Since the spectrogram visualizations seem to be the most effective visualization aid, we limited this and subsequent analysis to annotations using spectrograms.

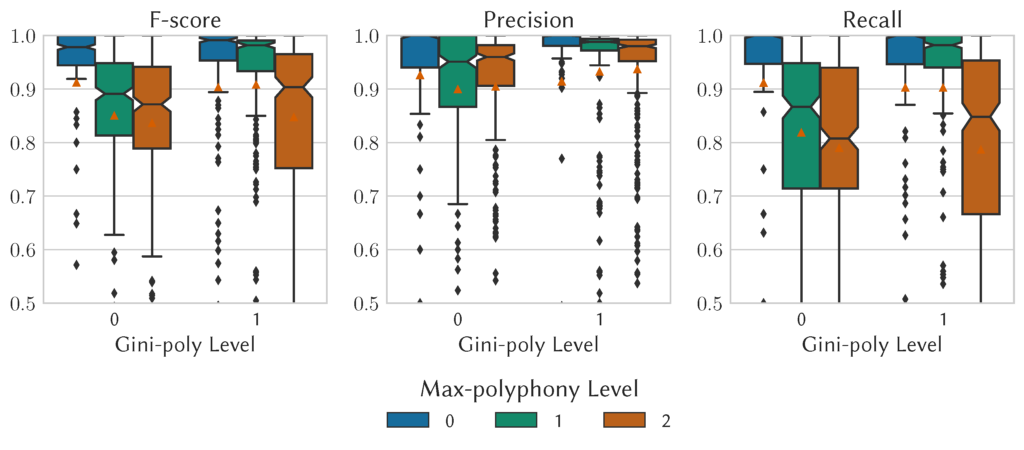

To investigate the effect of soundscape complexity on annotation quality, we broke the annotation quality metrics down by the soundscape complexity levels and found that as maximum polyphony increased, recall decreased significantly, but precision decreased only slightly. This implies that when annotators are given complex soundscapes, the resulting annotations will still be precise, although some sound event annotations may be missing. The results with regard to Gini polyphony are less clear; for more details, see the paper.
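To see why a missed event lowers recall but leaves precision untouched, consider the following frame-based sketch. It assumes annotations are (label, onset, offset) triples compared on a 0.1 s grid; the frame size, function names, and example annotations are assumptions for illustration and may not match the evaluation procedure in the paper.

```python
from typing import List, Set, Tuple

Annotation = Tuple[str, float, float]  # (label, onset, offset) in seconds


def to_frames(annotations: List[Annotation], duration: float, hop: float) -> Set[Tuple[str, int]]:
    """Convert annotations to a set of (label, frame_index) activations."""
    active = set()
    n_frames = int(round(duration / hop))
    for label, onset, offset in annotations:
        for i in range(n_frames):
            t = (i + 0.5) * hop  # frame centre
            if onset <= t < offset:
                active.add((label, i))
    return active


def precision_recall(estimate: List[Annotation], reference: List[Annotation],
                     duration: float = 10.0, hop: float = 0.1) -> Tuple[float, float]:
    est = to_frames(estimate, duration, hop)
    ref = to_frames(reference, duration, hop)
    true_positives = len(est & ref)
    precision = true_positives / len(est) if est else 0.0
    recall = true_positives / len(ref) if ref else 0.0
    return precision, recall


# Invented example: the annotator labels the siren correctly but misses the dog.
reference = [("dog barking", 1.0, 3.0), ("siren wailing", 2.0, 6.0)]
estimate = [("siren wailing", 2.0, 6.0)]
precision, recall = precision_recall(estimate, reference)
print(precision, recall)
```

In this invented case every labeled frame is correct, so precision stays at 1.0, while the overlooked event pulls recall down to two thirds.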

In our analysis of annotation quality we found that quality varied with respect to sound class. To investigate this effect, we looked at how the onsets and offsets of crowdsourced annotations deviated from the ground-truth annotations by sound class (i.e., estimate minus ground truth). We found that some classes have deviations with typically large biases (e.g., gun shooting) and large variances (e.g., dog barking and people talking). With so few classes, it’s hard to generalize these results, but it’s possible that these effects are the result of sound classes which are more affected by perceptual masking due to having more dynamic amplitude envelopes.
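A per-class summary of such deviations could be computed along the following lines. The sketch reports the mean deviation (bias) and the population standard deviation (spread) for each class; the function name, the placeholder class labels, and all numbers are invented for illustration and are not results from the study.

```python
from collections import defaultdict
from statistics import mean, pstdev
from typing import Dict, Iterable, Tuple


def deviation_stats(pairs: Iterable[Tuple[str, float, float]]) -> Dict[str, Tuple[float, float]]:
    """Map each class to (bias, spread) of estimate minus ground truth.

    `pairs` yields (label, estimated_time, ground_truth_time) in seconds.
    """
    by_class = defaultdict(list)
    for label, estimated, truth in pairs:
        by_class[label].append(estimated - truth)
    return {label: (mean(devs), pstdev(devs)) for label, devs in by_class.items()}


# Invented onset times: class_a is consistently late, class_b is unbiased but noisy.
toy_onsets = [
    ("class_a", 1.3, 1.0),
    ("class_a", 2.5, 2.0),
    ("class_b", 0.5, 1.0),
    ("class_b", 1.5, 1.0),
]
stats = deviation_stats(toy_onsets)
print(stats)
```

A large bias with small spread suggests a systematic shift in the perceived boundary, whereas a small bias with large spread suggests annotators simply disagree about where the event starts or ends.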

Practical Implications

The findings have practical implications for the design and operation of crowdsourcing-based audio annotation systems:

- When investigating the redundancy/reliability trade-off in the number of annotators, we found that the value of additional annotators decreased after 5–10 annotators and that 16 annotators captured 90% of the gain in annotation quality. However, when resources are limited and cost is a concern, our findings suggest that five annotators may be a reasonable choice for reliable annotation with respect to the trade-off between cost and quality.

- When investigating the effect of sound visualizations on annotation quality, the results demonstrate that given enough time, all three visualization aids enable annotators to identify sound events with similar recall, but that the spectrogram visualization enables annotators to identify sounds more quickly. We speculate this may be because annotators are able to more easily identify visual patterns in the spectrogram, which in turn enables them to identify sound events more quickly. We also see that participants learn how to use each interface more effectively over time, suggesting that we can expect higher quality annotations with even a small amount of additional training. From this experiment, we do not see any benefit of using the waveform or no-visualization aids when participants are adequately trained on the visualization.

- When examining the effect of soundscape characteristics on annotation quality, we found that while there are interactions between our two complexity measures and their effect on quality, they both affected recall more than precision. This is an encouraging result since it implies that when collecting annotations for complex scenes, we can trust the precision of the labels that we do obtain even if the annotations are incomplete. This also indicates that when training and evaluating machine listening systems, type II errors should possibly be weighted less than type I errors if the ground-truth was annotated by humans and vice versa if the ground-truth was annotated by a synthesis engine such as Scaper.

- We found substantial sound-class effects on annotation quality. In particular, for certain sound classes we found a discrepancy between the perceived onset and offset times when a sound is in a mixture and when a sound is in isolation (our ground-truth annotations). We speculate this effect is caused by long attack and decay times for certain sound classes (e.g., “gun shooting”) and that the effect may also be present in other classes exhibiting similar characteristics (e.g., “car passing”), which were not tested. Future research focusing on specific sound classes would be needed to examine such sound class effects rigorously. For crowdsourcing systems’ design and operation purposes, these discrepancies could be accounted for in the training and evaluation of machine listening systems by having class-dependent weights for type II errors.

- Class-dependent errors also seemed to be a result of class confusions and missed detection of events. We speculate that one possible solution to mitigate confusion errors would be to provide example recordings of sound classes to which annotators could refer while annotating.
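One simple way to express class-dependent weights for type II errors is a weighted error count in which each class's false negatives are scaled by its own factor. The sketch below is only a starting point under stated assumptions: the function name, the placeholder class labels, the weight values, and the error counts are all hypothetical, and the source does not prescribe a specific weighting scheme.

```python
from typing import Dict, Tuple


def weighted_error(counts: Dict[str, Tuple[int, int]],
                   fn_weights: Dict[str, float],
                   fp_weight: float = 1.0) -> float:
    """Sum type I and type II errors with a per-class weight on type II errors.

    `counts` maps class -> (false_positives, false_negatives).
    Classes missing from `fn_weights` fall back to a weight of 1.0.
    """
    total = 0.0
    for label, (false_pos, false_neg) in counts.items():
        total += fp_weight * false_pos + fn_weights.get(label, 1.0) * false_neg
    return total


# Invented counts; class_a's false negatives are trusted half as much.
toy_counts = {"class_a": (2, 4), "class_b": (1, 3)}
toy_weights = {"class_a": 0.5}
print(weighted_error(toy_counts, toy_weights))
```

The same weights could in principle be applied to a training loss instead of an evaluation score; which direction to weight, and by how much, would depend on how the ground truth was produced and on the class in question.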

Data

We’ve released a dataset called the Seeing Sound Dataset which contains all soundscapes, final annotations, and participant demographics:

Tools

The following tools were used to produce this work:

Acknowledgments

This work was partially supported by National Science Foundation award 1544753. This work also would not be possible without the participation and effort of many workers on Amazon’s Mechanical Turk platform.