Overview

For the midterm project, I developed an interactive two-player battle game with a ninja theme. Players control their characters with their body movements and make specific gestures to trigger the characters' moves and skills as they fight each other. The game is called “Battle Like a Ninjia”.

Background

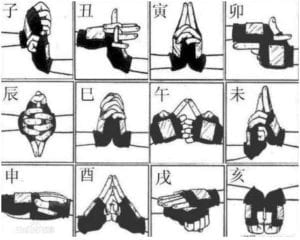

The idea of having players use physical body movement to make the characters release different skills is to a large extent inspired by the cartoon “Naruto”. In Naruto, most ninjas need to perform a series of hand gestures before they launch their powerful ninjutsu skills. However, in most existing Naruto battle games, players launch a character's skills simply by pressing different buttons on a joystick. In my project, I wanted to put more emphasis on the physical preparation these characters go through in the animation before they release their skills, so players pose different body gestures to trigger different moves of the character in the game. Google's Pixel 4, which features hand-gesture interaction, also inspired me.

Motivation

I've always found that the physical interaction between players and games is limited in most games today. Even though the development of VR and physical computing has brought more games like “Beat Saber” and “Just Dance”, the number of video games that give people a feeling of physical involvement is still small. I therefore thought it would be fun to explore more ways for players to interact with a game by getting rid of keyboards and joysticks and having players use their bodies to control a battle game.

Methodology

To track the movement of the players' bodies and use it as input to the game, I used the PoseNet model to get the coordinates of each part of the player's body. I first worked out the conditions each body part's coordinates need to meet to trigger an action of the character. I started by recording the coordinates of certain body parts while a specific gesture was posed. I then set a range around those coordinates and coded the game so that a move is triggered when all of these body parts' coordinates fall within their ranges. In this way, I “trained” the program to recognize the player's gesture by comparing the coordinates of the player's body parts with the pre-coded coordinates needed to trigger the move. For instance, in the first picture below, when the player puts her hands together and makes a specific hand sign like the one Naruto makes in the animation before he releases a skill, the Naruto figure in the game does the same thing and releases the skill. However, what the program recognizes is not actually the player's hand gesture but the coordinates of the player's wrists and elbows. When the Y coordinates of the player's left and right wrists and elbows are the same, the program recognizes this and produces an output.
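The range comparison described above could be sketched roughly as follows. This is only an illustration, not the game's actual code: the `Keypoint` shape is modeled on the part name, position, and confidence score that PoseNet reports for each body part, while `PartRange`, `MIN_SCORE`, `matchesGesture`, `isHandSign`, and the 30-pixel tolerance are hypothetical names and values chosen for the example.

```typescript
// One detected body part, modeled on PoseNet's keypoint output.
interface Keypoint {
  part: string;
  position: { x: number; y: number };
  score: number; // detection confidence between 0 and 1
}

// Hypothetical: the pre-coded coordinate range one body part must fall in.
interface PartRange {
  part: string;
  xMin: number;
  xMax: number;
  yMin: number;
  yMax: number;
}

// Assumed threshold: keypoints below it are treated as not detected.
const MIN_SCORE = 0.5;

// True only when every listed body part is detected and inside its range.
function matchesGesture(keypoints: Keypoint[], ranges: PartRange[]): boolean {
  return ranges.every((range) => {
    const kp = keypoints.find((k) => k.part === range.part);
    if (kp === undefined || kp.score < MIN_SCORE) return false;
    const { x, y } = kp.position;
    return x >= range.xMin && x <= range.xMax && y >= range.yMin && y <= range.yMax;
  });
}

// Hand-sign check from the text: both wrists and both elbows sit at about
// the same height. The tolerance (in pixels) is an assumed value.
function isHandSign(keypoints: Keypoint[], tolerance = 30): boolean {
  const parts = ["leftWrist", "rightWrist", "leftElbow", "rightElbow"];
  const ys: number[] = [];
  for (const part of parts) {
    const kp = keypoints.find((k) => k.part === part);
    if (kp === undefined || kp.score < MIN_SCORE) return false;
    ys.push(kp.position.y);
  }
  return Math.max(...ys) - Math.min(...ys) <= tolerance;
}
```

Using a tolerance instead of strict equality matters in practice, because the estimated coordinates shift slightly from frame to frame even when the player holds still.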

Experiments

Originally, I wanted to use a hand-tracking model to train a hand gesture recognition model that could recognize hand gestures and change the character's moves accordingly. However, I later found that PoseNet could do what I wanted just as well, and in some ways better, so I ended up using only PoseNet. Even though it is sometimes less stable than I hoped, it makes it possible to use more diverse body movements as input.

While building this game, I ran into many difficulties. I tried using the coordinates of the ankles to make the game react to the players' foot movement. However, because of the position of the webcam, it is very difficult for the camera to see the players' ankles. The players would need to stand very far from the screen, which prevents them from seeing the game. Even when the model did return coordinates for the ankles, the numbers were very inaccurate. The PoseNet model also turned out to be not very good at telling the left wrist from the right wrist. At first I wanted the model to recognize when the player's right hand was held high and then make the character go right. However, I found that when only one hand is on the screen, the model cannot tell right from left, so I had to program the game to make the character go right when the player's right wrist is higher than the left wrist.
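The wrist comparison can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the game's code: the `Keypoint` shape is modeled on PoseNet's output, `moveDirection`, `margin`, and `minScore` are hypothetical names, and mapping a higher left wrist to moving left is an assumed mirror of the rule described above. Image coordinates grow downward, so the higher wrist is the one with the smaller Y value.

```typescript
// One detected body part, modeled on PoseNet's keypoint output.
interface Keypoint {
  part: string;
  position: { x: number; y: number };
  score: number; // detection confidence between 0 and 1
}

type Direction = "left" | "right" | "none";

// Decide the movement direction by comparing the two wrists with each other
// instead of checking one hand alone. `margin` (pixels) and `minScore` are
// assumed values that keep small jitter from moving the character.
function moveDirection(keypoints: Keypoint[], margin = 20, minScore = 0.5): Direction {
  const left = keypoints.find((k) => k.part === "leftWrist");
  const right = keypoints.find((k) => k.part === "rightWrist");
  // Both wrists must be detected; with only one hand in view the model
  // cannot reliably tell left from right.
  if (!left || !right || left.score < minScore || right.score < minScore) {
    return "none";
  }
  // Positive when the right wrist is higher on screen (smaller Y).
  const diff = left.position.y - right.position.y;
  if (diff > margin) return "right";
  if (diff < -margin) return "left"; // assumed mirror of the "go right" rule
  return "none";
}
```

Requiring both wrists to be visible trades some convenience for reliability: the player has to keep both hands in frame, but the character no longer moves in the wrong direction when the model mislabels a single hand.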

Social Impact

This project is not only an entertaining game but also a new way of applying machine learning to the design of interactive games. I hope it can bring joy to players and also show that the interaction between games and players is not limited to keyboards or joysticks. By using the PoseNet model, I hope this project lets people see the potential machine learning has for diversifying the interaction between players and games, and that a fun, interactive game raises their interest in learning more about machine learning. Most games today still rely on joysticks, mice, or keyboards, which is not necessarily a bad thing, but I hope that with the help of machine learning, more and more innovative ways of interacting with games will become possible in the future. I hope people can find inspiration in my project.

Further Development

If given more time, I will first improve the interface of the game. During user testing, it was brought to my attention that many players forgot the gestures they needed to make to trigger the character's skills, so I might need to include an instruction page on the site. In addition, I will try to make more moves available in response to the player's gestures to make the game more fun. I was also advised to create more characters for players to choose from. So perhaps in the final version of this game, I will apply a style transfer model to generate different characters and battle scenes to give players more choices.