This post explains how and why we designed Groove Pizza.

What it does

The Groove Pizza represents beats as concentric rhythm necklaces. The circle represents one measure. Each slice of the pizza is a sixteenth note. The outermost ring controls the kick drum; the middle one controls the snare; and the innermost one plays cymbals.

Connecting the dots on a given ring creates shapes, like the square formed by the snare drum in the pattern below.

The pizza can play time signatures other than 4/4 by changing the number of slices. Here’s a twelve-slice pizza playing an African bell pattern.

You can explore the geometry of musical rhythm by dragging shapes onto the circular grid. Patterns that are visually appealing tend to sound good, and patterns that sound good tend to look cool.

Herbie Hancock did some user testing for us, and he suggested that we make it possible to show the interior angles of the shapes.

Groove Pizza History

The ideas behind the Groove Pizza began in my master’s thesis work in 2013 at NYU. For his NYU senior thesis, Adam November built web and physical prototypes. In late summer 2015, Adam wrote what would become the Groove Pizza 1.0 (GP1), with a library of drum patterns that he and I curated. The MusEDLab has been user testing this version for the past year, both with kids and with music and math educators in New York City.

In January 2016, the Music Experience Design Lab began developing the Groove Pizza 2.0 (GP2) as part of the MathScienceMusic initiative.

MathScienceMusic Groove Pizza Credits:

- Original Ideas: Ethan Hein, Adam November & Alex Ruthmann

- Design: Diana Castro

- Software Architect: Kevin Irlen

- Creative Code Guru: Matthew Kaney

- Backend Code Guru: Seth Hillinger

- Play Testing: Marijke Jorritsma, Angela Lau, Harshini Karunaratne, Matt McLean

- Odds & Ends: Asyrique Thevendran, Jamie Ehrenfeld, Jason Sigal

The learning opportunity

The goals of the Groove Pizza are to help novice drummers and drum programmers get started; to create a gentler introduction to beatmaking with more complex tools like Logic or Ableton Live; and to use music to open windows into math and geometry. The Groove Pizza is intended to be simple enough to be learned easily without prior experience or formal training, but it must also have sufficient depth to teach substantial and transferable skills and concepts, including:

- Familiarity with the component instruments in a drum beat and the ability to pick them individually out of the sound mass.

- A repertoire of standard patterns and rhythmic motifs. Understanding of where to place the kick, snare, hi-hats and so on to produce satisfying beats.

- Awareness of different genres and styles and how they are distinguished by their different degrees of syncopation, customary kick drum patterns and claves, tempo ranges and so on.

- An intuitive understanding of the difference between strong and weak beats and the emotional effect of syncopation.

- Acquaintance with the concept of hemiola and other more complex rhythmic devices.

Marshall (2010) recommends “folding musical analysis into musical experience.” Programming drums in pop and dance idioms makes the rhythmic abstractions concrete.

Visualizing rhythm

Western music notation is fairly intuitive on the pitch axis, where height on the staff corresponds clearly to pitch height. On the time axis, however, Western notation is less easily parsed—horizontal space need not have any bearing at all on time values. A popular alternative is the “time-unit box system,” a kind of rhythm tablature used by ethnomusicologists. In a time-unit box system, each pulse is represented by a square. Rhythmic onsets are shown as filled boxes.

Nearly all electronic music production interfaces use the time-unit box system, including grid sequencers and the MIDI piano roll.

A row of time-unit boxes can also be wrapped in a circle to form a rhythm necklace. The Groove Pizza is simply a set of rhythm necklaces arranged concentrically.
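As a minimal Python sketch of that wrapping, assume a row of time-unit boxes is written as a string, with `x` for a filled box and `.` for an empty one (a notation chosen here for illustration). Each box gets an equal share of the circle, measured clockwise from twelve o’clock as on the pizza; the function name `necklace` is hypothetical.

```python
def necklace(boxes: str) -> list[tuple[int, float]]:
    """Map a time-unit box row to (slice index, clockwise angle in degrees).

    Only filled boxes ('x') are returned, but every box, filled or not,
    takes an equal share of the 360-degree circle.
    """
    n = len(boxes)
    return [(i, 360.0 * i / n) for i, box in enumerate(boxes) if box == "x"]


# Four-on-the-floor kick: one onset on every quarter note of a 16-slice measure.
kick = "x...x...x...x..."
print(necklace(kick))  # [(0, 0.0), (4, 90.0), (8, 180.0), (12, 270.0)]
```

Beats one and three of this kick pattern (slices 0 and 8) come out 180 degrees apart, directly across the circle from each other.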

Circular rhythm visualization offers a significant advantage over linear notation: it shows metrical function more clearly. We can define meter as “the grouping of perceived beats or pulses into equivalence classes” (Forth, Wiggins & McLean, 2010, 521). Linear musical concepts like small-scale melodies depend mostly on relationships between adjacent, or at least closely spaced, events. But periodicity and meter depend on relationships between nonadjacent events. Linear representations of music do not show meter directly. Nothing on the page indicates that the first and third beats of a measure of 4/4 time are functionally related, as are the second and fourth beats.

However, when we wrap the musical timeline into a circle, meter becomes much easier to parse. Pairs of metrically related beats are directly opposite one another on the circle. Rotational and reflectional symmetries give strong clues to metrical function generally. For example, this illustration of 2-3 son clave adapted from Barth (2011) shows an axis of reflective symmetry between the fourth and twelfth beats of the pattern. This symmetry is considerably less obvious when viewed in more conventional notation.
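Reflectional symmetry can also be checked programmatically. Any reflection of an n-slice circle sends slice x to (c - x) mod n for some constant c, so it is enough to try every c. This sketch assumes 0-indexed slices and writes the 2-3 son clave as slices {2, 4, 8, 11, 14} on a sixteen-slice grid; the function name is hypothetical.

```python
def reflection_axes(onsets: list[int], slices: int) -> list[tuple[float, float]]:
    """Return the axes of reflectional symmetry of a rhythm necklace.

    Slices are 0-indexed. A reflection of the circle sends slice x to
    (c - x) mod slices for some constant c; its axis runs through the
    positions c/2 and c/2 + slices/2, which may fall between two slices.
    """
    pattern = {x % slices for x in onsets}
    axes = []
    for c in range(slices):
        if {(c - x) % slices for x in pattern} == pattern:
            axes.append((c / 2, c / 2 + slices / 2))
    return axes


son_2_3 = [2, 4, 8, 11, 14]            # ..x.x...x..x..x.
print(reflection_axes(son_2_3, 16))    # [(3.0, 11.0)]
```

The single axis runs through slices 3 and 11, which are the fourth and twelfth slices when counting from one. By contrast, the four-on-the-floor square [0, 4, 8, 12] has four axes.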

The Groove Pizza adds a layer of dynamic interaction to circular representation. Users can change time signatures during playback by adding or removing slices, so complete novices can perform complex metrical shifts. Furthermore, each rhythm necklace can be rotated during playback, enabling the kind of rhythmic modularity characteristic of the most sophisticated Afro-Latin and jazz rhythms. Understanding and executing rotational rhythmic transformations normally requires advanced music-reading and performance skills, but Groove Pizza users can explore them effortlessly.
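In data terms, rotation is just modular addition on slice indices. This minimal sketch assumes a necklace is stored as a list of 0-indexed onset slices (an illustrative choice; the function name is hypothetical). Rotating the 3-2 son clave by half of a sixteen-slice measure yields the 2-3 son clave.

```python
def rotate(onsets: list[int], steps: int, slices: int = 16) -> list[int]:
    """Rotate a rhythm necklace by `steps` slices.

    Positive steps turn the pattern clockwise, so every onset moves later in
    the cycle; onsets that pass the end of the measure wrap around to the start.
    """
    return sorted((x + steps) % slices for x in onsets)


son_3_2 = [0, 3, 6, 10, 12]   # x..x..x...x.x...
print(rotate(son_3_2, 8))     # [2, 4, 8, 11, 14], the 2-3 son clave
```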

Visualizing swing

We traditionally associate swing with jazz, but it is omnipresent in American vernacular music: in rock, country, funk, reggae, hip-hop, EDM, and so on. For that reason, swing is a standard feature of notation software, MIDI sequencers, and drum machines. However, while swing is crucial to rhythmic expressiveness, it is rarely visualized in any explicit way, in notation or in software interfaces. Sequencers will sometimes show swing by displacing events on the MIDI piano roll, but the user must place those events first. The grid itself generally does not show swing.

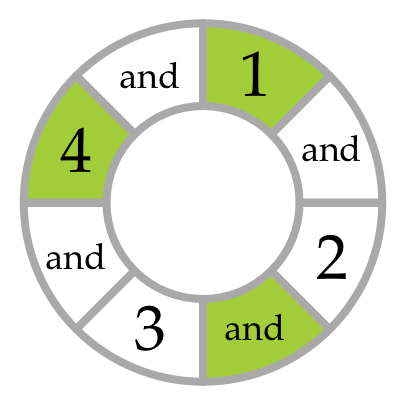

The Groove Pizza uses a novel (and to our knowledge unprecedented) graphical representation of swing on the background grid, not just on the musical events. The slices alternately expand and contract in width according to the amount of swing specified. At 0% swing, the wedges are all of uniform width. At 50% swing, the odd-numbered slice in each pair is twice as long as the following even-numbered slice. As the user adjusts the swing slider, the slices dynamically change their width accordingly.
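The text fixes only two points on the swing scale: equal widths at 0% and a 2:1 ratio at 50%. Assuming the long slice’s share of each pair grows linearly between those two points (an assumption for illustration, not necessarily how the Groove Pizza computes it), the angular width of each slice could be computed as follows. The function name is hypothetical.

```python
def swung_slice_widths(slices: int, swing_percent: float) -> list[float]:
    """Angular width in degrees of each slice for a given swing amount.

    Assumes an even number of slices grouped into (long, short) pairs and a
    hypothetical linear mapping: 0% swing gives 1:1, 50% swing gives 2:1.
    """
    if slices % 2:
        raise ValueError("swing needs an even number of slices")
    if not 0 <= swing_percent <= 50:
        raise ValueError("this sketch only covers 0-50% swing")
    pair = 360.0 * 2 / slices               # degrees occupied by each pair
    long_share = 0.5 + swing_percent / 300  # 0.5 at 0%, 2/3 at 50%
    widths = []
    for _ in range(slices // 2):
        widths += [pair * long_share, pair * (1 - long_share)]
    return widths


print([round(w, 1) for w in swung_slice_widths(16, 50)[:4]])  # [30.0, 15.0, 30.0, 15.0]
```

Whatever the swing amount, each pair still spans the same 45 degrees, so the downbeat of every pair stays fixed and the whole measure still fills the circle.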

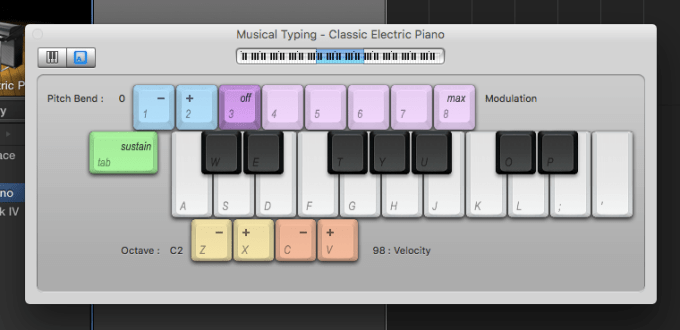

Our swing visualization system also addresses the issue of whether swing should be applied to eighth notes or sixteenths. In the jazz era, swing was understood to apply to eighth notes. However, since the 1960s, swing has more commonly been applied to sixteenth notes, reflecting a broader shift from eighth-note to sixteenth-note pulse in American vernacular music. To hear the difference, compare the swung eighth note pulse of “Rockin’ Robin” by Bobby Day (1958) with the sixteenth note pulse of “I Want You Back” by the Jackson Five (1969). Electronic music production tools like Ableton Live and Logic default to sixteenth-note swing. However, notation programs like Sibelius, Finale and Noteflight can only apply swing to eighth notes.

The Groove Pizza supports both eighth and sixteenth swing simply by changing the slice labeling. The default labeling scheme is agnostic, simply numbering the slices sequentially from one. In GP1, users can choose to label a sixteen-slice pizza either as one measure of sixteenth notes or two measures of eighth notes. The grid looks the same either way; only the labels change.
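To illustrate that only the labels change, here is a sketch that produces three labeling schemes for the same sixteen-slice grid. The scheme names and the “1 e & a” counting syllables are common teaching conventions assumed here, not necessarily the exact labels GP1 displays; `slice_labels` is a hypothetical name.

```python
def slice_labels(slices: int, scheme: str = "numbers") -> list[str]:
    """Label each slice under one of three hypothetical schemes.

    "numbers":    1, 2, 3, ... (the agnostic default)
    "sixteenths": one measure of sixteenths, counted 1 e & a 2 e & a ...
    "eighths":    measures of eighths in 4/4, counted 1 & 2 & 3 & 4 & ...
    """
    if scheme == "numbers":
        return [str(i + 1) for i in range(slices)]
    if scheme == "sixteenths":
        syllables = ["", "e", "&", "a"]
        return [str(i // 4 + 1) if i % 4 == 0 else syllables[i % 4] for i in range(slices)]
    if scheme == "eighths":
        # Beat numbers restart every eight slices, i.e. every 4/4 measure of eighths.
        return [str(i // 2 % 4 + 1) if i % 2 == 0 else "&" for i in range(slices)]
    raise ValueError(f"unknown scheme: {scheme}")


print(slice_labels(16, "eighths"))
# ['1', '&', '2', '&', '3', '&', '4', '&', '1', '&', '2', '&', '3', '&', '4', '&']
```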

Drum kits

With one drum sound per ring, the number of sounds available to the user is limited by the number of rings that can reasonably fit on the screen. In my thesis prototype, we were able to accommodate six sounds per “drum kit.” GP1 was reduced to five rings, and GP2 has only three rings, prioritizing simplicity over musical versatility.

GP1 offers three drum kits: Acoustic, Hip-Hop, and Techno. The Acoustic kit uses samples of a real drum kit; the Hip-Hop kit uses samples of the Roland TR-808 drum machine; and the Techno kit uses samples of the Roland TR-909. GP2 adds two more kits: Jazz (an acoustic drum kit played with brushes) and Afro-Latin (congas, bell, and shaker). Preset patterns automatically load with specific kits selected, but the user is free to change kits after loading.

In GP1, sounds can be mixed and matched at will, so the user can, for example, combine the acoustic kick with the hip-hop snare. In GP2, kits cannot be customized. A larger palette of sounds would open up more sonic possibilities. However, placing strict limits on the sounds available has its own creative advantage: it eliminates option paralysis and forces users to concentrate on creating interesting patterns, rather than struggling to choose from a long list of sounds.

It became clear in the course of testing that open and closed hi-hats need not occupy separate rings, since they should never sound at the same time. (While drum machines are not bound by the physical limitations of human drummers, our rhythmic traditions are.) In future versions of the GP, we plan to place closed and open hi-hats together on the same ring. Clicking a beat in the hi-hat ring will place a closed hi-hat; clicking it again will replace it with an open hi-hat; and a third click will return the beat to silence. We will use the same mechanic to toggle between high and low cowbells or congas.
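The planned single-ring mechanic amounts to a small state machine per slice. The sketch below is hypothetical (the names `HiHat`, `NEXT_STATE`, and `click` are illustrative, not Groove Pizza code); because each slice stores exactly one state, a closed and an open hi-hat can never be scheduled on the same beat.

```python
from enum import Enum


class HiHat(Enum):
    SILENT = 0
    CLOSED = 1
    OPEN = 2


# One click advances a slice one step around the cycle:
# silent -> closed -> open -> silent.
NEXT_STATE = {
    HiHat.SILENT: HiHat.CLOSED,
    HiHat.CLOSED: HiHat.OPEN,
    HiHat.OPEN: HiHat.SILENT,
}


def click(ring: list[HiHat], slice_index: int) -> None:
    """Advance the clicked slice of the hi-hat ring to its next state, in place."""
    ring[slice_index] = NEXT_STATE[ring[slice_index]]


hihat_ring = [HiHat.SILENT] * 16
click(hihat_ring, 2)
click(hihat_ring, 2)
print(hihat_ring[2])  # HiHat.OPEN
```

Swapping the enum members for high and low cowbell (or conga) would give the same three-click cycle.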

Preset patterns

In keeping with the constructivist value of working with authentic cultural materials, the exercises in the Groove Pizza are based on rhythms drawn from actual music. Most of the patterns are breakbeats—drums and percussion sampled from funk, rock and soul recordings that have been widely repurposed in electronic dance and hip-hop music. There are also generic rock, pop and dance rhythms, as well as an assortment of traditional Afro-Cuban patterns.

The GP1 offers a broad selection of preset patterns. The GP2 uses a smaller subset of these presets.

Breakbeats

- The Winstons, “Amen, Brother” (1969)

- James Brown, “Cold Sweat” (1967)

- James Brown, “The Funky Drummer” (1970)

- Bobby Byrd, “I Know You Got Soul” (1971)

- The Honeydrippers, “Impeach The President” (1973)

- Skull Snaps, “It’s A New Day” (1973)

- Joe Tex, “Papa Was Too” (1966)

- Stevie Wonder, “Superstition” (1972)

- Melvin Bliss, “Synthetic Substitution” (1973)

Afro-Cuban

- Bembé—also known as the “standard bell pattern”

- Rumba clave

- Son clave (3-2)

- Son clave (2-3)

Pop

- Michael Jackson, “Billie Jean” (1982)

- Boots-n-cats—a prototypical disco pattern, e.g. “Funkytown” by Lipps Inc (1979)

- INXS, “Need You Tonight” (1987)

- Uhnntsss—the standard “four on the floor” pattern common to disco and electronic dance music

Hip-hop

- Lil Mama, “Lip Gloss” (2008)

- Nas, “Nas Is Like” (1999)

- Digable Planets, “Rebirth Of Slick (Cool Like Dat)” (1993)

- OutKast, “So Fresh, So Clean” (2000)

- Audio Two, “Top Billin’” (1987)

Rock

- Pink Floyd, “Money” (1973)

- Peter Gabriel, “Solisbury Hill” (1977)

- Billy Squier, “The Big Beat” (1980)

- Aerosmith, “Walk This Way” (1975)

- Queen, “We Will Rock You” (1977)

- Led Zeppelin, “When The Levee Breaks” (1971)

Jazz

- Bossa nova, e.g. “The Girl From Ipanema” by Antônio Carlos Jobim (1964)

- Herbie Hancock, “Chameleon” (1973)

- Miles Davis, “It’s About That Time” (1969)

- Jazz spang-a-lang—the standard swing ride cymbal pattern

- Jazz waltz—e.g. “My Favorite Things” as performed by John Coltrane (1961)

- Dizzy Gillespie, “Manteca” (1947)

- Horace Silver, “Song For My Father” (1965)

- Paul Desmond, “Take Five” (1959)

- Herbie Hancock, “Watermelon Man” (1973)

Mathematical applications

The most substantial new feature of GP2 is “shapes mode.” The user can drag shapes (triangle, square, pentagon, hexagon, and octagon) onto the grid and rotate them to create geometric drum patterns. Placing shapes in this way creates maximally even rhythms that are nearly always musically satisfying (Toussaint 2013). For example, on a sixteen-slice pizza, the pentagon forms rumba or bossa nova clave, while the hexagon creates a tresillo rhythm. As a general matter, the way that a rhythm “looks” gives insight into the way it sounds, and vice versa.
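A regular polygon rarely lines up exactly with the slices, so its vertices have to be snapped to the grid. One simple approach, assumed here rather than taken from the Groove Pizza source, is to round each ideal vertex position i·n/k down to a whole slice; for k onsets on n slices this yields a maximally even pattern. The helper names `snap_polygon` and `is_rotation` are hypothetical.

```python
def snap_polygon(vertices: int, slices: int, rotation: int = 0) -> list[int]:
    """Onset slices for a regular polygon, each vertex rounded down onto the grid."""
    return sorted((i * slices // vertices + rotation) % slices for i in range(vertices))


def is_rotation(a: list[int], b: list[int], slices: int) -> bool:
    """True if necklace b can be rotated onto necklace a."""
    target = set(a)
    return any({(x + r) % slices for x in b} == target for r in range(slices))


pentagon = snap_polygon(5, 16)   # [0, 3, 6, 9, 12]
hexagon = snap_polygon(6, 16)    # [0, 2, 5, 8, 10, 13]
print(is_rotation(pentagon, [0, 3, 6, 10, 13], 16))    # True: bossa nova clave
print(is_rotation(hexagon, [0, 3, 6, 8, 11, 14], 16))  # True: tresillo, twice per measure
```

The same helper turns the square into four on the floor ([0, 4, 8, 12]) and the octagon into straight eighth notes.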

Because of the way it uses circle geometry, the Groove Pizza can be used to teach or reinforce the following subjects:

- Fractions

- Ratios and proportional relationships

- Angles

- Polar vs Cartesian coordinates

- Symmetry: rotations, reflections

- Frequency vs duration

- Modular arithmetic

- The unit circle in the complex plane

Specific kinds of music can help to introduce specific mathematical concepts. For example, Afro-Cuban patterns and other grooves built on hemiola are useful for graphically illustrating the concept of least common multiples. When presented with a kick playing every four slices and a snare playing every three slices, a student can both see and hear how they will line up every twelve slices. Bamberger and diSessa (2003) describe the “aha” moment that students have when they grasp this concept in a music context. One student in their study is quoted as describing the twelve-beat cycle “pulling” the other two beats together. Once students grasp least common multiples in a musical context, they have a valuable new inroad into a variety of scientific and mathematical concepts: harmonics in sound analysis, gears, pendulums, tiling patterns, and much else.
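The arithmetic behind that “aha” moment fits in a few lines of Python (3.9 or later, for `math.lcm`). The sketch assumes both parts start together on slice 0; `coincidences` is a hypothetical helper name.

```python
from math import lcm


def coincidences(periods: list[int], span: int) -> list[int]:
    """Slices (0-indexed) within `span` where every part strikes together."""
    return [s for s in range(span) if all(s % p == 0 for p in periods)]


print(lcm(4, 3))                  # 12
print(coincidences([4, 3], 24))   # [0, 12]
```

On a twelve-slice pizza, then, the kick and snare meet only at the top of the circle, once per revolution.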

In addition to eighth and sixteenth notes, GP1 users can also label the pizza slices as fractions or angles, both Cartesian and polar. Users can thereby describe musical concepts in mathematical terms, and vice versa. The correspondence is direct: on a sixteen-slice pizza, a sixteenth note spans one sixteenth of the circle, a polar angle of 2π/16 = π/8. One could go even further with polar mode and use it as the unit circle on the complex plane. From there, lessons could move into powers of e, the relationship between sine and cosine waves, and other more advanced topics. The Groove Pizza could thereby be used to lay the groundwork for concepts in electrical engineering, signal processing, and anything else involving wave mechanics.
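Each labeling is a different view of the same quantity: slice k of an n-slice pizza is the fraction k/n of the measure, the angle 2πk/n, and, on the unit circle in the complex plane, the point e^(2πik/n), one of the nth roots of unity. A minimal sketch, assuming 0-indexed slices; the function name is hypothetical.

```python
import cmath
import math
from fractions import Fraction


def slice_coordinates(k: int, slices: int = 16):
    """Describe the start of slice k as a fraction, an angle, and a complex number.

    Angles follow the usual math convention (counterclockwise from the positive
    x-axis), not the pizza's clockwise-from-twelve layout. The complex number
    e^(i * theta) is one of the `slices`-th roots of unity.
    """
    fraction = Fraction(k, slices)        # share of the measure elapsed
    radians = 2 * math.pi * k / slices    # polar angle
    point = cmath.exp(1j * radians)       # point on the unit circle
    return fraction, math.degrees(radians), radians, point


frac, deg, rad, z = slice_coordinates(1)
print(frac, round(deg, 2), round(rad / math.pi, 4))  # 1/16 22.5 0.125
```

So one sixteenth-note slice is 1/16 of the measure, 22.5 degrees, or π/8 radians, and raising its unit-circle point to the 16th power returns to 1, just as sixteen sixteenth notes complete the measure.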

Future work

The Groove Pizza does not offer any tone controls such as duration, pitch, or EQ. This choice was due to a combination of expediency and the push to reduce option paralysis. However, velocity (loudness) control is a high-priority future feature. While nuanced velocity control is not necessary for the artificial aesthetic of electronic dance music, a basic loud/medium/soft toggle would make the Groove Pizza a more versatile tool.

The next step beyond preset patterns is to offer drum programming exercises or challenges. In an exercise, users would be presented with a pattern, which they could alter as they see fit by adding and removing drum hits and by rotating instrument parts within their respective rings. Constraints of various kinds would keep the results appealing and musical-sounding, with tighter constraints for more basic exercises and looser ones for more advanced exercises. For example, we might present users with a locked four-on-the-floor kick pattern and ask them to create a satisfying techno beat using the snares and hi-hats. We also plan to create game-like challenges, where users are given the sound of a beat and must figure out how to represent it on the circular grid.

The Groove Pizza would be more useful for teaching trigonometry and circle geometry if it were presented slightly differently. Presently, the first beat of each pattern is at twelve o’clock, with playback running clockwise. However, angles are conventionally measured from three o’clock, increasing counterclockwise. To create “math mode,” the radial grid would need to be reflected left-to-right and rotated ninety degrees.
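A sketch of the two layouts, assuming unit-radius coordinates with y pointing up; the function names are hypothetical. The math-mode position of each slice turns out to be its current position with x and y swapped.

```python
import math


def pizza_xy(k: int, slices: int = 16) -> tuple[float, float]:
    """Current layout: slice 0 at twelve o'clock, playback running clockwise."""
    phi = 2 * math.pi * k / slices
    return math.sin(phi), math.cos(phi)


def math_mode_xy(k: int, slices: int = 16) -> tuple[float, float]:
    """Hypothetical "math mode": slice 0 at three o'clock, running counterclockwise."""
    theta = 2 * math.pi * k / slices
    return math.cos(theta), math.sin(theta)


# Slice 4 of 16 (beat two) sits at three o'clock now and at twelve o'clock in math mode.
print([round(c, 6) for c in pizza_xy(4)])      # [1.0, 0.0]
print([round(c, 6) for c in math_mode_xy(4)])  # [0.0, 1.0]
```

Swapping x and y is a reflection across the diagonal y = x, which is the same as a left-to-right mirror followed by a quarter turn clockwise.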

References

Ankney, K.L. (2012). Alternative representations for musical composition. Visions of Research in Music Education, 20.

Bamberger, J., & diSessa, A. (2003). Music As Embodied Mathematics: A Study Of A Mutually Informing Affinity. International Journal of Computers for Mathematical Learning, 8(2), 123–160.

Bamberger, J. (1996). Turning Music Theory On Its Ear. International Journal of Computers for Mathematical Learning, 1: 33-55.

Bamberger, J. (1994). Developing Musical Structures: Going Beyond the Simples. In R. Atlas & M. Cherlin (Eds.), Musical Transformation and Musical Intuition. Ovenbird Press.

Barth, E. (2011). Geometry of Music. In Greenwald, S. and Thomley, J., eds., Essays in Encyclopedia of Mathematics and Society. Ipswich, MA: Salem Press.

Bell, A. (2013). Oblivious Trailblazers: Case Studies of the Role of Recording Technology in the Music-Making Processes of Amateur Home Studio Users. Doctoral dissertation, New York University.

Benadon, F. (2007). A Circular Plot for Rhythm Visualization and Analysis. Music Theory Online, Volume 13, Issue 3.

Demaine, E.; Gomez-Martin, F.; Meijer, H.; Rappaport, D.; Taslakian, P.; Toussaint, G.; Winograd, T.; & Wood, D. (2009). The Distance Geometry of Music. Computational Geometry 42, 429–454.

Forth, J.; Wiggins, G.; & McLean, A. (2010). Unifying Conceptual Spaces: Concept Formation in Musical Creative Systems. Minds & Machines, 20:503–532.

Magnusson, T. (2010). Designing Constraints: Composing and Performing with Digital Musical Systems. Computer Music Journal, Volume 34, Number 4, pp. 62 – 73.

Marrington, M. (2011). Experiencing Musical Composition In The DAW: The Software Interface As Mediator Of The Musical Idea. The Journal on the Art of Record Production, (5).

Marshall, W. (2010). Mashup Poetics as Pedagogical Practice. In Biamonte, N., ed. Pop-Culture Pedagogy in the Music Classroom: Teaching Tools from American Idol to YouTube. Lanham, MD: Scarecrow Press.

McClary, S. (2004). Rap, Minimalism and Structures of Time in Late Twentieth-Century Culture. In Warner, D. ed., Audio Culture. London: Continuum International Publishing Group.

Monson, I. (1999). Riffs, Repetition, and Theories of Globalization. Ethnomusicology, Vol. 43, No. 1, 31-65.

New York State Learning Standards and Core Curriculum — Mathematics

Ruthmann, A. (2012). Engaging Adolescents with Music and Technology. In Burton, S. (Ed.). Engaging Musical Practices: A Sourcebook for Middle School General Music. Lanham, MD: R&L Education.

Thibeault, M. (2011). Wisdom for Music Education from the Recording Studio. General Music Today, 20 October 2011.

Thompson, P. (2012). An Empirical Study Into the Learning Practices and Enculturation of DJs, Turntablists, Hip-Hop and Dance Music Producers. Journal of Music, Technology & Education, Volume 5, Number 1, 43 – 58.

Toussaint, G. (2013). The Geometry of Musical Rhythm. Cleveland: Chapman and Hall/CRC.

____ (2005). The Euclidean algorithm generates traditional musical rhythms. Proceedings of BRIDGES: Mathematical Connections in Art, Music, and Science, Banff, Alberta, Canada, July 31 to August 3, 2005, pp. 47-56.

____ (2004). A comparison of rhythmic similarity measures. Proceedings of ISMIR 2004: 5th International Conference on Music Information Retrieval, Universitat Pompeu Fabra, Barcelona, Spain, October 10-14, 2004, pp. 242-245.

____ (2003). Classification and phylogenetic analysis of African ternary rhythm timelines. Proceedings of BRIDGES: Mathematical Connections in Art, Music, and Science, University of Granada, Granada, Spain July 23-27, 2003, pp. 25-36.

____ (2002). A mathematical analysis of African, Brazilian, and Cuban clave rhythms. Proceedings of BRIDGES: Mathematical Connections in Art, Music and Science, Towson University, Towson, MD, July 27-29, 2002, pp. 157-168.

Whosampled.com. “The 10 Most Sampled Breakbeats of All Time.”

Wiggins, J. (2001). Teaching for musical understanding. Rochester, Michigan: Center for Applied Research in Musical Understanding, Oakland University.

Wilkie, K.; Holland, S.; & Mulholland, P. (2010). What Can the Language of Musicians Tell Us about Music Interaction Design? Computer Music Journal, Vol. 34, No. 4, 34-48.